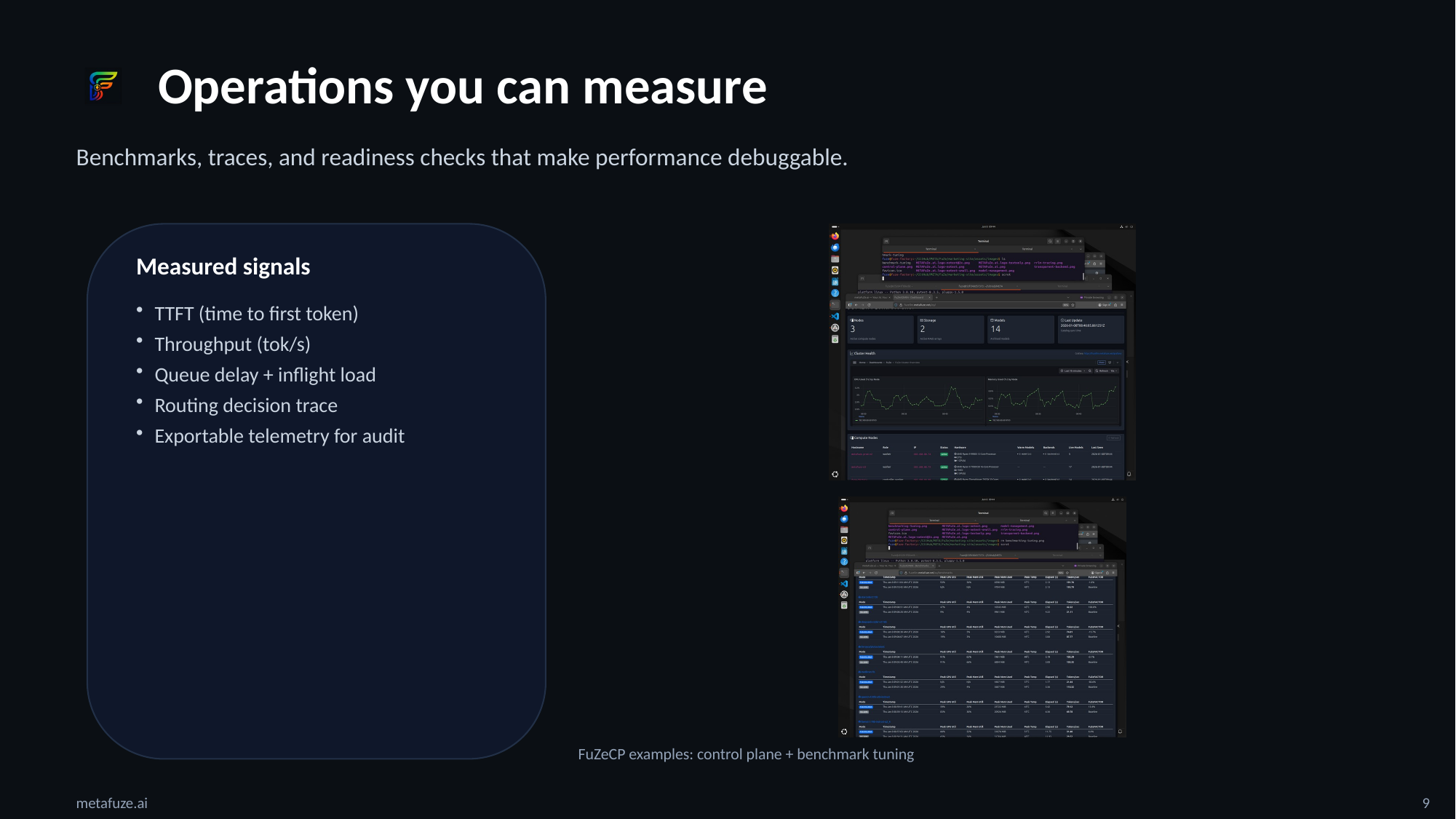

Reviewable by design

Benchmarks and readiness

Benchmarks, readiness gates, and architecture you can review.

This page is a quick tour of what exists today: benchmark harnesses, readiness gates, and the perimeter-first control model. No hand-wavy numbers, just concrete capabilities you can inspect.

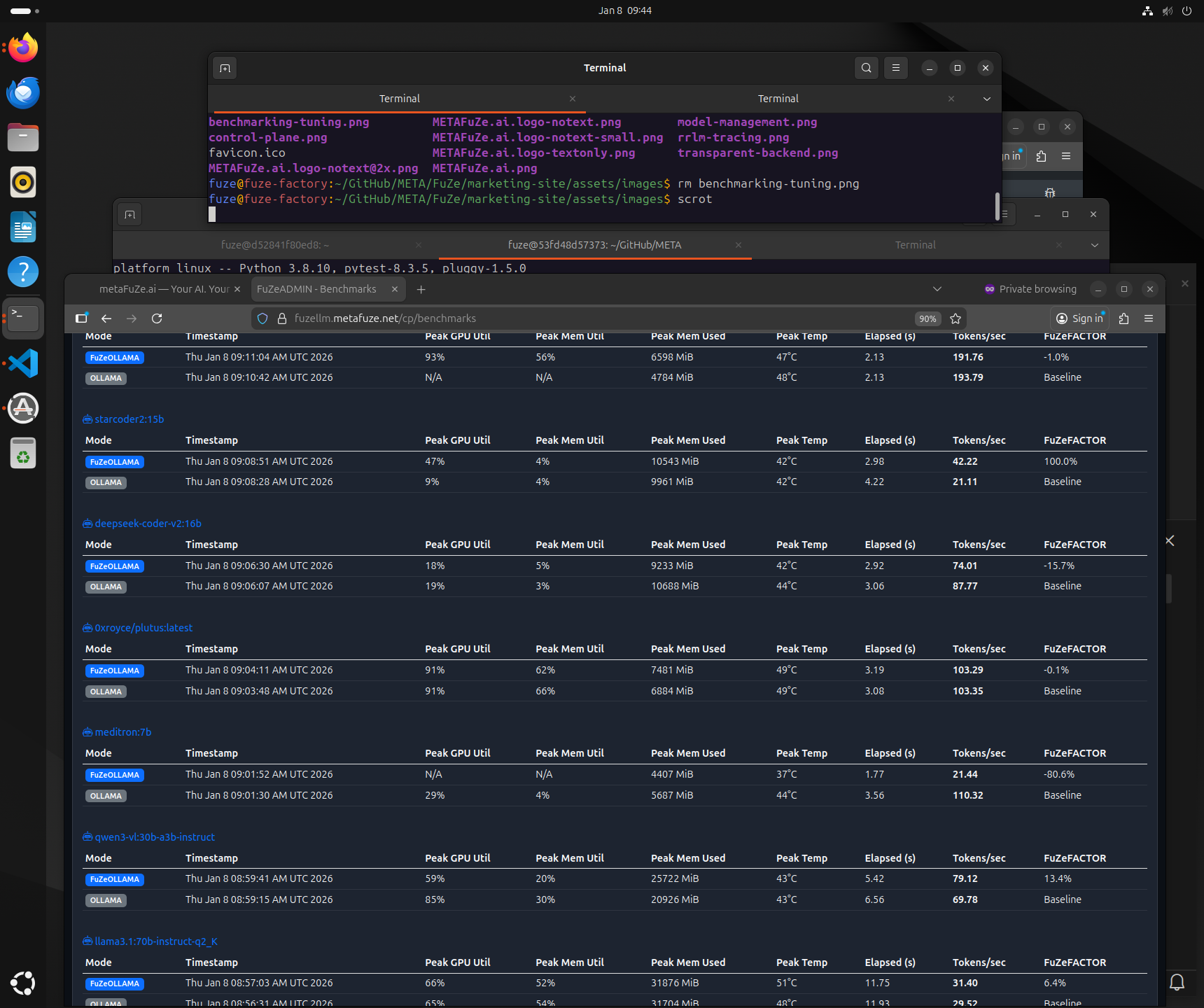

Benchmarks and performance discipline

- Storage and data-path benchmarking to validate throughput under realistic conditions.

- Inference harnesses for latency and throughput regression detection under load.

- Tuning workflow to identify bottlenecks and validate improvements with repeatable runs.
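As an illustration of what a latency regression check can look like, here is a minimal sketch in Python. It is not the harness itself: the function names (`measure_latencies`, `summarize`, `has_regressed`) and the 10% tolerance are assumptions chosen for the example.

```python
import statistics
import time
from typing import Callable, Dict, List


def measure_latencies(fn: Callable[[], object], runs: int = 50, warmup: int = 5) -> List[float]:
    """Time repeated calls to fn, discarding warmup runs so results are repeatable."""
    for _ in range(warmup):
        fn()
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append(time.perf_counter() - start)
    return samples


def summarize(samples: List[float]) -> Dict[str, float]:
    """Return the median and the nearest-rank 95th percentile of the samples."""
    ordered = sorted(samples)
    # Nearest-rank percentile, computed with integer arithmetic.
    p95_index = max(0, (95 * len(ordered) + 99) // 100 - 1)
    return {"p50": statistics.median(ordered), "p95": ordered[p95_index]}


def has_regressed(baseline: Dict[str, float], current: Dict[str, float],
                  tolerance: float = 0.10) -> bool:
    """Flag a regression if any metric exceeds its baseline by more than tolerance."""
    return any(current[key] > baseline[key] * (1 + tolerance) for key in baseline)
```

A run would compare `summarize(measure_latencies(call_model))` against a stored baseline, where `call_model` is a hypothetical stand-in for the inference call under test.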

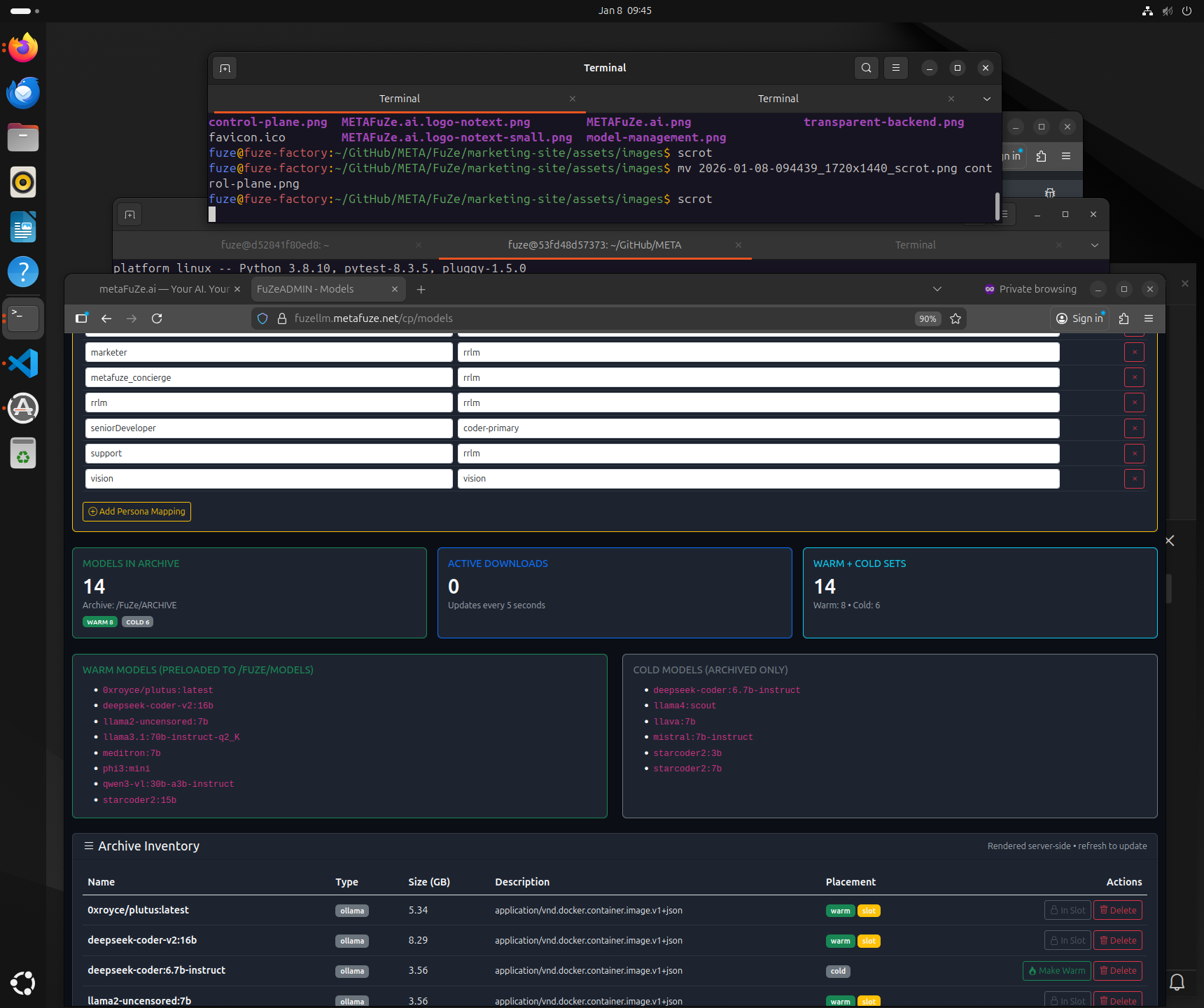

Examples from FuZeCP are shown below.

Production readiness

- Readiness checks before services report ready: CUDA availability, tokenizer sanity, configuration loading, and tensor loader probes.

- Cache-awareness and correctness testing plans to prevent silent degradation.

- Guardrails, observability hooks, and incident learning loops for diagnosable behavior.

Designed to keep production behavior predictable and diagnosable.
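A readiness gate of the kind listed above can be sketched as a set of named probes that must all pass before a service reports ready. The sketch below is an assumption about the general shape, not the actual implementation; the probe functions are hypothetical stand-ins, and a real CUDA probe might call something like `torch.cuda.is_available()`.

```python
import json
from typing import Callable, Dict, List, Tuple


def run_readiness_checks(checks: Dict[str, Callable[[], bool]]) -> Tuple[bool, List[str]]:
    """Run every probe; a probe that returns False or raises counts as a failure."""
    failures = []
    for name, check in checks.items():
        try:
            passed = bool(check())
        except Exception:
            passed = False
        if not passed:
            failures.append(name)
    return (len(failures) == 0, failures)


# Hypothetical stand-in probes, for illustration only.
def config_loads(raw: str = '{"max_batch": 8}') -> bool:
    """Configuration loading: the raw text must parse to a JSON object."""
    return isinstance(json.loads(raw), dict)


def tokenizer_round_trips(text: str = "hello world") -> bool:
    """Tokenizer sanity: splitting and rejoining must reproduce the input."""
    tokens = text.split(" ")
    return " ".join(tokens) == text


checks = {
    "cuda": lambda: True,           # stand-in for a real device probe
    "tokenizer": tokenizer_round_trips,
    "config": config_loads,
    "tensor_loader": lambda: True,  # stand-in for a real tensor loader probe
}
ready, failures = run_readiness_checks(checks)
```

Returning the names of the failed probes, rather than a bare boolean, keeps a failed startup diagnosable.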

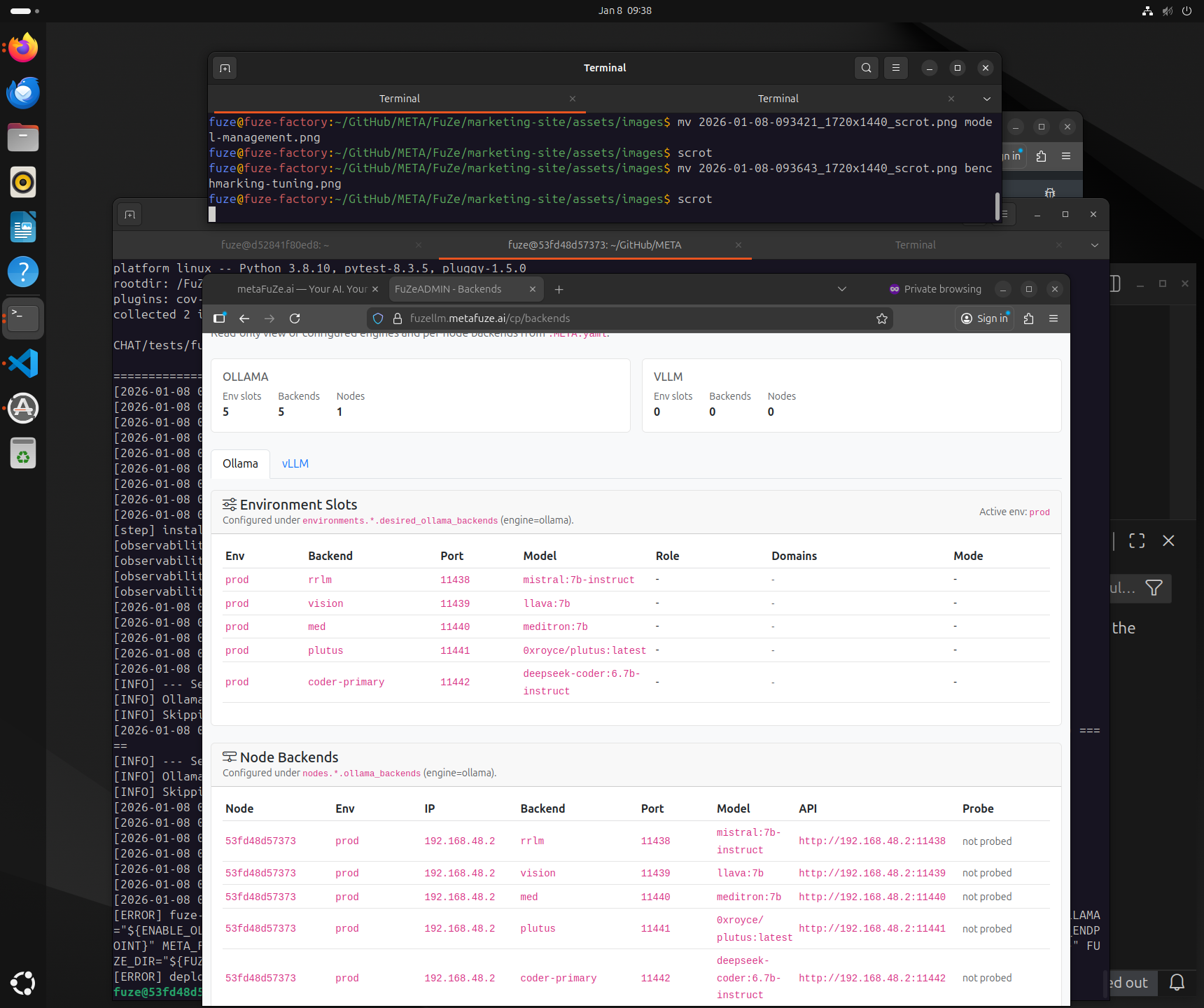

Perimeter-first control model

Tenancy

Single-tenant FuZeCLOUD or on-prem FuZeBOX. No shared control plane.

Adaptive routing

RRLM routing optimizes quality, latency, and cost, with an optional Q-learning policy loop.
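To make the trade-off concrete, here is a rough sketch of routing on a weighted score of quality, latency, and cost, plus a simplified learning loop. Everything in it is an assumption for illustration: the `Route` fields, the weights, and the `QRouter` class are hypothetical, and the update rule is a single-state Q-value update (a bandit-style simplification with no next-state term), not a description of how RRLM works.

```python
import random
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Route:
    name: str
    quality: float     # expected answer quality, 0..1 (higher is better)
    latency_ms: float  # expected latency in milliseconds
    cost_usd: float    # expected cost per request


def score(route: Route, w_quality: float = 1.0, w_latency: float = 0.001,
          w_cost: float = 10.0) -> float:
    """Weighted score: reward quality, penalize latency and cost."""
    return (w_quality * route.quality
            - w_latency * route.latency_ms
            - w_cost * route.cost_usd)


def pick_route(routes: List[Route], **weights: float) -> Route:
    """Choose the route with the highest weighted score."""
    return max(routes, key=lambda r: score(r, **weights))


class QRouter:
    """Epsilon-greedy router that learns one value per route from observed rewards."""

    def __init__(self, route_names: List[str], alpha: float = 0.1,
                 epsilon: float = 0.1, seed: int = 0) -> None:
        self.q: Dict[str, float] = {name: 0.0 for name in route_names}
        self.alpha = alpha
        self.epsilon = epsilon
        self.rng = random.Random(seed)

    def choose(self) -> str:
        """Explore with probability epsilon, otherwise pick the best-valued route."""
        if self.rng.random() < self.epsilon:
            return self.rng.choice(list(self.q))
        return max(self.q, key=self.q.get)

    def update(self, name: str, reward: float) -> None:
        """Move the route's value toward the observed reward by step size alpha."""
        self.q[name] += self.alpha * (reward - self.q[name])
```

In this sketch the static score gives a sensible default, and the learned values could replace or adjust it once enough reward feedback has accumulated.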

RBAC context

Context assembly ties into RBAC so retrieval and memory are policy-correct per user and role.
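One way to picture policy-correct context assembly is to filter retrieved chunks by the requesting user's roles before anything reaches the model. The sketch below assumes a simple role-set model; `Chunk`, `allowed_roles`, and `assemble_context` are hypothetical names, not the product's API.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List


@dataclass(frozen=True)
class Chunk:
    text: str
    allowed_roles: FrozenSet[str]  # roles permitted to see this chunk


def assemble_context(chunks: Iterable[Chunk], user_roles: Iterable[str],
                     limit: int = 5) -> List[str]:
    """Keep only chunks the user may see, preserving retrieval order, up to limit."""
    roles = frozenset(user_roles)
    permitted = [chunk.text for chunk in chunks if chunk.allowed_roles & roles]
    return permitted[:limit]


retrieved = [
    Chunk("Q3 revenue summary", frozenset({"finance"})),
    Chunk("Public product FAQ", frozenset({"finance", "support"})),
    Chunk("Incident postmortem", frozenset({"sre"})),
]
support_context = assemble_context(retrieved, ["support"])
```

Filtering before assembly, rather than after generation, means content outside a user's roles never enters the prompt in the first place.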

Audit

Telemetry and audit trails stay inside the customer boundary by default.